tokio::task::spawn_blocking) doesn't work very well.

wrk2 and the web server were running on the same 6-core (12 hyper-thread) machine. Without these two thread-offloading changes, my server handled 1000 rps with a mean latency of 3ms, p90 of 4ms, and max of 6ms. At 5000 rps it had a mean latency of 8ms, a p90 of 22ms, and a max of 58ms. However, once my two commits were in to move my db calls + markdown-to-html logic off of the Tokio runtime, the server started to fall apart at 1000 rps, sometimes not even completing that workload.

tokio::task::spawn at places I want concurrency. Seems a little silly because I'd have to wrap the whole thing in an async block to make a future. It'd potentially help for the two queries I can do concurrently. On the whole, I'm not actually sure whether the overhead of shifting these sqlite queries over to other threads is more than just running the queries synchronously inline, but that was why I was experimenting a bit. Another approach might be to keep using spawn_blocking but introduce some concurrency control + load shedding on the calls before scheduling the blocking tasks. I ended up trying out the rayon approach.

rayon for a CPU-bound thread pool + a tokio::sync::oneshot to communicate the result back into a Tokio task. Before gluing together these bits, I figured I'd see if someone had already done something similar and found tokio-rayon. It provides the convenience function I needed to submit tasks to the rayon global executor (by default, sized at the number of logical cores in your system). It actually has both LIFO and FIFO versions of spawn. The downside was that this wasn't sufficient to stop my server from going non-responsive under load when offloading these particular calls to other threads.

Error(None) coming from within my application. For whatever reason the logs weren't providing a module or file here (or I missed it). I had already been experimenting with moving some stuff over from the log crate to the tracing crate, and log messages can be converted to tracing Events just by changing the library I used, no other code changes required. This pointed me at the module and function at least, and this is when I started suspecting my database connection pool. A few edits to the log message I suspected confirmed that suspicion.

diesel::r2d2). The default connection pool size was 10 and the default timeout for acquiring a connection was 30s. This meant that if the connection pool was overwhelmed by connection requests, a bunch of those would stack up and all fail after 30s. This partly explains why my changes made the server go unresponsive! I was potentially requesting more connections than before my code changes.
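To make the pool behavior described above a bit more concrete, here is a minimal sketch using only the Rust standard library. It is not the diesel/r2d2 API: `Pool`, `acquire`, and `release` are hypothetical names, and this "pool" only counts free slots rather than holding real connections. The assumption is a fixed number of slots plus a timeout on each acquire, which is the shape of the defaults mentioned above (10 slots, 30s).

```rust
use std::sync::{Condvar, Mutex};
use std::time::Duration;

/// Hypothetical stand-in for a connection pool: it only tracks free slots.
struct Pool {
    free: Mutex<usize>,
    available: Condvar,
}

impl Pool {
    fn new(size: usize) -> Self {
        Pool {
            free: Mutex::new(size),
            available: Condvar::new(),
        }
    }

    /// Try to take a slot, waiting up to `timeout` for one to free up.
    /// Returns false if the wait timed out with no slot available.
    fn acquire(&self, timeout: Duration) -> bool {
        let guard = self.free.lock().unwrap();
        // Block while there are zero free slots, but never longer than `timeout`.
        let (mut guard, result) = self
            .available
            .wait_timeout_while(guard, timeout, |free| *free == 0)
            .unwrap();
        if result.timed_out() {
            return false;
        }
        *guard -= 1;
        true
    }

    /// Hand a slot back and wake one waiter, if any.
    fn release(&self) {
        *self.free.lock().unwrap() += 1;
        self.available.notify_one();
    }
}
```

With a long timeout, every caller that arrives while the pool is exhausted sits in `acquire` for the full duration before failing, which is how a burst of offloaded tasks can make the whole server look hung. A shorter timeout, or a concurrency limit in front of the pool, makes those callers fail fast instead.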

I haven’t done one of these in a few years but feel like this one warrants it.

Unsurprisingly, 2020-2022 were pretty quiet years for my running. 2018 was a strong year for me but unfortunately ended in injury. 2019 was a year of recovery and rebuilding, and then 2020-2022 turned into much the same. I was lucky to be healthy throughout that time and while I wasn’t training for very many races, I was running pretty consistently. Not a lot, but consistently. A silver lining was that it helped me rebuild the habit and consistently get out there even during a time of low motivation. Sure, I put in a decent training block for CIM in 2021, but otherwise I was mostly “just running” without much in the way of big weekly mileage or even too many workouts. 2023 was supposed to be different: I planned a return to bigger training blocks and goal races with PRs in mind. In many ways it was a big year and I have a lot to be thankful for that motivates me for the coming year.

Unfortunately, I didn’t run CIM after all of that work. On a trip to SF in early November I injured my knee. The day after running my 10 mile PR I flew to SF and then in the evening I stubbornly pedaled an e-bike with a dead battery to my hotel while carrying my luggage. This combination of things proved to be too much for my right knee and I had a lot of pain in it for a few weeks. After a few weeks of relative rest I had to accept that CIM wasn’t happening (and also that I wouldn’t make a quick enough recovery for any other early winter races).

I’m ending the year having run just over 2200 miles (2206.7 as of this writing, which will be the total unless I decide to risk running on fresh snow with my knee that is still not totally healed). This is far and away the most I’ve run in a year since at least 2013. I’ve had recent years in the 1700 or 1800 miles range but haven’t crossed 2000 miles for the year since at least 2012 or 2013.

I’m currently able to do ~3 mile runs more or less pain free but I still don’t have a full idea of what a recovery timeline looks like in order to begin training again, so it’s hard to set goals for next year. During marathon training I was thinking that next year would be good to get back to shorter races (5k to 10k) and work on speed, as that was a particularly weak point in my training this past cycle (strides and even 10k pace felt *hard*). But I also now feel like I have unfinished business with the marathon given that I had essentially my best ever marathon training cycle but didn’t get the chance to fully express that fitness. I’m also coming away with the lesson that even though I don’t feel like I overtrained (not ending my cycle with an overuse injury from running is a big win for me), I still need to be cautious with other things I’m doing when I’m putting my body under lots of stress for big training cycles. I’m sure that cumulative fatigue contributed to my injury during that fateful bike ride.

So, I’m thankful for a 2023 that showed me I can still put in big mileage and recover after many many years of being unable to put together this type of training. I’m also thankful for a 10 mile PR.

In 2024 my priorities are:

1. Recover. Be patient and get back to consistent 5-6 days a week of running, no matter how conservative I have to be to get there. It’s not worth rushing it and ending up in another injury cycle.

2. Be healthy for GLR. It’s headed back to the UP next year and a brand new day 3. If I can’t be healthy enough by July, something very serious is wrong.

3. Re-evaluate other race goals as health and fitness allow but I’m still holding out hope to be able to put together a strong Fall 2024 training cycle of some kind.

While I started or made progress on reading many books in 2022, unfortunately this year was thin on books that I completed. Still, there were a few that stood out for me. I'm going to focus on just a few fiction books that I enjoyed this year.

This was the conclusion to her A Chorus Of Dragons series. I'd mentioned another book in this series in my 2019 year in books and haven't written another similar post since. I thoroughly enjoyed the entire series. The characters were interesting and the world building was unique. It had quite a broad set of places and people and, if anything, was slightly too large for me to keep in my head in the span between book releases so I imagine it would be easier to follow along with now that all of the books have been written and released.

The other day when I was at the book store, I stumbled on another book by Lyons, The Raven Tower. It was an easy purchase even though I don't know what it's about!

The Poppy War is a story of generations of recurring conflict between two countries, with hints of the supernatural early on before really expanding on that aspect of this world in the mid and late parts of the book. Overall I enjoyed the story and mythology that were built up in this book. Since it was a book with war, I was prepared for it to be violent, but it was quite graphic and gruesome at points and I didn't know that going in. Knowing that now, I still would read it but it's probably worth knowing more than I did before starting. I'd classify it as even more violent and brutal than Game of Thrones was (both the books and TV show).

This is, I think, the 2nd to last book in the Cradle series. I think the last few books have really hit their stride and I'm looking forward to the next one. Both this series and the next are easy reading (I get through the books fast, too fast) but also very satisfying. Just the kind of fun fantasy book I like to read for entertainment, good pace and doesn't feel like it's high effort to consume.

I'm somewhat sad the series is coming to an end next year but I hear that there are other series by this author - perhaps I'll check those out.

This series has a mix of fantasy book + video game magic system feel that is pretty interesting. I know that is what the author is going for and it's not a style I'd encountered before his books. They're quite fun and I like what is happening with multiple series (another being The War of Broken Mirrors) occurring in the same world, on differing timelines and with different writing styles.

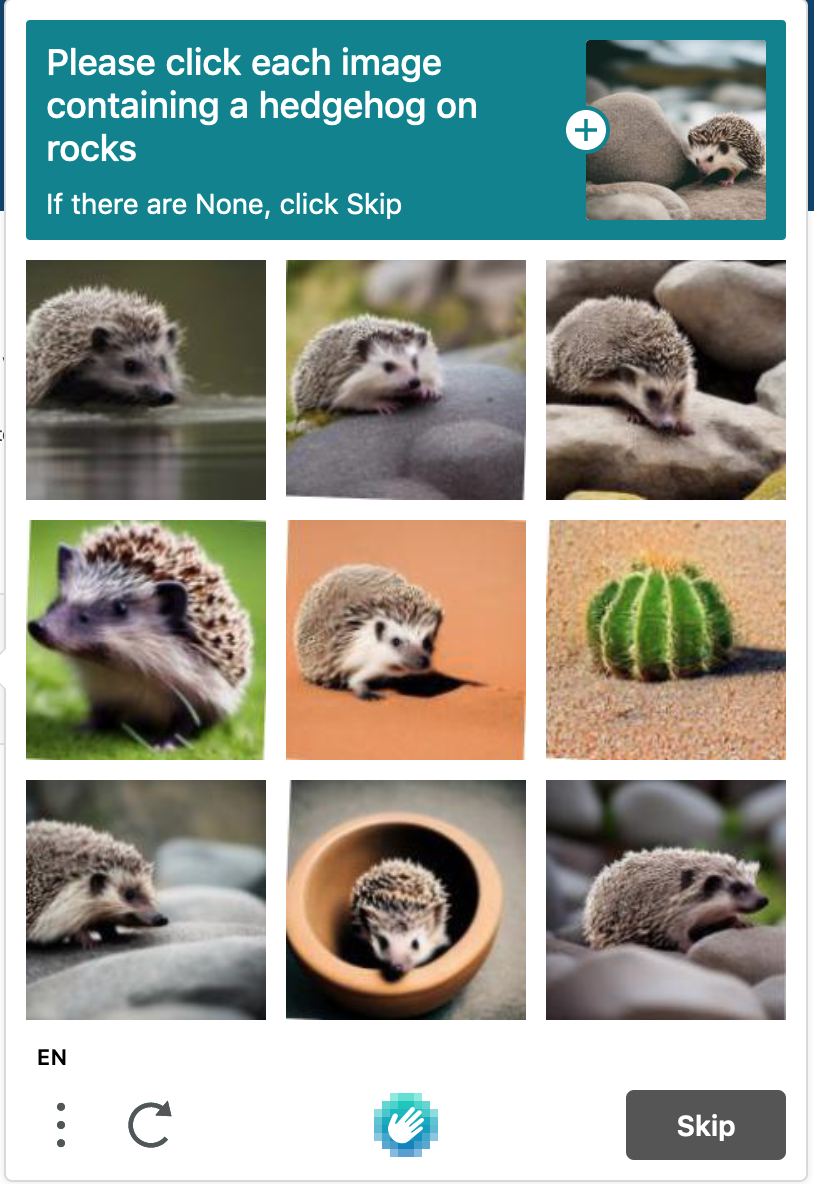

Testing media uploads on the rewritten site with an upload of the cutest captcha ever

I've been doing the first few days of this year's advent of code with awk (GNU awk to be precise). The first day I learned some new things about arrays with awk.

The first was that you can set a global variable called PROCINFO["sorted_in"] to determine the order that arrays (which are really associative arrays not "vectors", I believe) are iterated over for for-loops. There's a bunch of options for sorting array values by string or numerically or by type and the options have ascending and descending variants. One caveat is that since this is controlled by a global variable, you will change the default for later loops unless you preserve the current value and then restore it. The docs are very good.

The second piece was that the array sorting functions (asort and asorti) can also be provided with the same options to adjust sort ordering (e.g. to treat array values as numbers instead of strings).

That second bit was what I needed for my AOC solution on day 1 but both of these are good to know.

My site now supports stripping Exif tags (and others) on media when uploaded via the Micropub media endpoint I've implemented. This was important to me to ensure that, when possible, identifying information like location is removed from any images I upload. This is doable before uploading the images but integrating it into the web endpoint makes it much more likely that it'll happen (especially if I ever get the Micropub implementation up to the point where something like a mobile upload can happen).

The code itself to do so within the upload handler was pretty straightforward so the more interesting bit is how I got to the point of being able to write that code and also packaging it up once it compiled.

I did a fair amount of looking around for solutions to this problem. Since my server is implemented in Rust, I was looking for Rust bindings to strip tags as reliably as possible (and didn't want to have to go down the rabbit hole of implementing it myself, for now).

There were some pure-Rust image crates but none seemed to have support for what I wanted to do. There was also `magick-rust` which provides Rust bindings for the ImageMagick MagickWand library. I would have preferred bindings to other portions of the ImageMagick API as the MagickWand stripping bindings seem to do a bit more other stuff than I really wanted but I still wasn't quite willing to build bindings to the rest of the API. The goal was stripping tags without implementing a whole lot of stuff in Rust in order to do so.

One problem was that the strip functionality provided in this API wasn't yet surfaced in the `magick-rust` crate. This seemed like something I would be able to add without going down a huge rabbit hole so I proceeded with this approach (the alternative at this point, given no other Rust options, was to shell out to ImageMagick itself so this was preferable). It also gave me a chance to learn about `rust-bindgen` as that is how this crate was built. A small PR later, the crate would support stripping tags! Excitingly, that PR was quickly accepted and merged.

Along the way, I also learned that default `rust-bindgen` cargo features involve building `clap`, a CLI parsing crate that's somewhat large (but also quite handy). This was surprising to me and stood out as my server doesn't currently have that crate as a dependency, so I dug in there. I learned that the default build of rust-bindgen also builds it as a CLI which requires that crate. I haven't made a PR out of this change yet but it seemed fairly easy to turn off that extra functionality, so I did so in a branch.

The other issue I ran into with this "small" bit of functionality was that I needed to be able to have the proper version of ImageMagick available at runtime so that my server could actually do the stripping (this dependency is not statically linked). This led to a host of problems.

First, the apt-based repositories the existing image build had access to were still on a 6.x version of ImageMagick. The `magick-rust` crate requires a build in the 7.0.x range or later. At first, I tried to work around this by building ImageMagick from source during the image build. This didn't seem too bad initially, I didn't have a whole lot of trouble getting the source downloaded, built, and installed within my Dockerfile. The trouble was, even once that was happening, the server was erroring when the image tag stripping code was called.

Looking into this some more, it turned out that a bunch of "optional" ImageMagick dependencies were not available (and had been mentioned as such during the `configure` script) and this meant that it didn't know how to read a bunch of common image formats. I had a really rough time trying to make all those deps available to the build. I tried to list them all out in an `apt-get` command, did things like installing the 6.x version to make sure its deps were available, and more, all to no avail.

Separately, I've been experimenting with Nix package manager (and NixOS) recently and had no problems building and running the server from within a `nix-shell` environment. Nix had a new enough ImageMagick and making the symbols available to the server at runtime wasn't really much of a problem. I knew that there was a way to build Docker images with nix and so, failing all of my more traditional attempts at packaging my server into a container image with its new ImageMagick dependency, I decided to look at packaging the server in a Docker image with nix.

This first involved being able to build my micropub server via `nix-build`. I'd written some other package derivations at this point so it seemed feasible but I hadn't done any Rust packages yet. It turned out not to be too bad, I ended up primarily following the `naersk` readme (and transitively, `niv`'s too). That paired with some additional suggestions from the NixOS wiki and Xe's blog (which has also been quite helpful with Nix in general) got me going. Once I had the project being built via `nix-build`, adding the Docker bits was relatively straightforward! (I leaned on essentially the same resources there, with the addition of the official manual).

Once all that was said and done, I had a minimal Docker image that could run my site and (finally) strip Exif tags from images that I upload!

I have more thoughts about Nix package management that I'm working to write up. It appeals to me in quite a few ways but some of its requirements also make me question whether I want to stick with it. In a lot of ways, the problems are endemic to package management or configuration management tools in general and so I might just have to decide to live with this one given all the benefits it brings. More on that soon, I hope.

Hennepin Ave is a major street here in Minneapolis and due for reconstruction. The city has been through multiple rounds of design and public comment and has arrived at a single proposed design that is currently open for public comment and is due to be up for review by the city council in the next few months.

Tonight I submitted a public comment on the design and figured I'd share it here as well. Below is an unedited form of that public comment, with a few hyperlinks added. It could be edited better but my emphasis was on getting the comment in to the city over making the comment perfect. For those who want more information about the specifics of what is changing, all of the project materials are available here.

--------------------------------------------------------------------------------------------------------------------

I strongly support the 24/7 bus lanes as well as the addition of a raised bike lane in the project area. There is good evidence and policy reason to move forward with these elements. First, a large percentage of people using Hennepin are on buses at rush hour (upwards of 40 to 50%) at all hours of the day. It is respectful of those people's time to prioritize buses that move more people with less space over individuals in personal vehicles. Second, the city's Transportation Action Plan states that Hennepin is designated to be part of the all ages and abilities bicycle network by 2030. This reconstruction is the time to follow that policy and add protected bike lanes. Finally, I really like that the north side the project will connect up with the Bryant Ave bike bridge to connect with the rest of the city.

Improved pedestrian facilities are also very important for Hennepin Ave. Crossing 4+ lanes of traffic can be dangerous when drivers are not paying attention and the traffic noise makes it unpleasant to walk down Hennepin. Strategies employed in the proposed plan including shifting some of those lanes to other uses (like buses) and decreasing the distance to cross Hennepin should improve that situation for pedestrians and overall lower injury rates on the street. Per the crash analysis done, pedestrian and bicycle crashes are at a especially high rate compared with the rest of the city. As someone who frequently traverses Hennepin on foot or by Bike, I am very concerned about improving this situation.

On parking, personally I am not concerned by the minor removal of parking along Hennepin. I usually walk and/or bike to business on Hennepin. However, in the cases I do feel the need to drive I can say that, as someone who lives within a few blocks of Hennepin within the Wedge, parking on Hennepin not something I would ever want to do even if a spot were available. This is due to the narrow lanes on Hennepin paired with the speeds that drivers employ along the street - it just does not feel like a safe or convenient place to park. I can also say that my personal experience matches the parking study in that there is often plenty of parking available within the a block or two on each side of Hennepin, so removal of a few parking spaces should not significantly affect accessibility to businesses for those that choose to drive.

One aspect of the plan that I do not like, and question whether it will hinder the design's effectiveness towards meeting city goals, is in maintaining the two left turn lanes onto northbound Hennepin from Lake Street. The dedicated bus lane does not start until two blocks north of that intersection, at the Uptown Metro Transit station. It seems to me that congestion at the Lake and Hennepin intersection will hinder the goals of the city and Metro Transit of providing reliable transit capabilities with BRT . Since there is no dedicated bus lane this intersection could delay service and make it more unreliable, which seems counter to the stated goals.

I understand the intent is to leave the two lanes due to volume of traffic making that turn, however it seems to me that reducing driving lanes just two blocks after the turn will still encounter the same congestion problems meant to be avoided by leaving the two left turn lanes, just on Hennepin instead of Lake St. I'd like to see more information on why planners believe this part of the proposed design will not hinder buses given the priority to be given to them. Has a traffic study been done on this option vs the reducing the left turn to one lane? It seems like the current design would result in more congestion and/or personal vehicles driving in the bus lane due to last minute merging than reducing the number of turn lanes from Lake on to Hennepin, thereby adding back pressure in high traffic times.

Overall, I think that this plan is a well balanced one meeting the city's adopted policies and many of the city's stated goals. It seems to have taken into account much community feedback on all aspects and I am excited for a more multi-modal Hennepin that is safer and more pleasant! The Metropolitan Council's interactive maps of Census data show that the reconstruction area touches or is near the 3 neighborhoods of highest population density in the city. These areas deserve a safe, multi-modal, enjoyable corridor for every day activities and this proposed design does a lot to get us to that goal.

After a very long time (with much starting and stopping of work) I finally got media uploads working on my micropub server!

In celebration, here's a picture of the bunny that hangs out in my yard:

With that out of the way, I thought I'd describe a bit about how this works and some of the challenges I hit along the way (including in writing this post!).

First, I had to implement the media upload portion of the micropub spec. This wasn't too bad but I needed to be able to store a few things:

This was broken out into two parts. For storing file data itself, I created a separate server from my micropub server. I called this rustyblobjectstore (terrible name but whatever). It's a thin blob store API meant to abstract over any particular file storage details. The commit I linked at the start of the post goes into more detail but the idea is that I can easily change file storage backends through this service without the micropub server needing to know anything about that.

For now, as an experiment in how this works, I'm just using a sqlite database to store the file data.

For metadata about the media, I created an additional table in the micropub server's database. This is used to store content type, a content hash, as well as created/modified dates. The content hash is used in the media URLs on the micropub server as well as the key for the object store API so it's kind of content-addressed storage (kind of).
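As a rough illustration of the "kind of content-addressed" idea, the sketch below derives a key from the uploaded bytes and reuses it in a URL. Everything named here is an assumption: `content_key` and `media_url` are hypothetical, the `/media/` path is made up for the example, and the standard library's `DefaultHasher` stands in for whatever hash the server really uses (a real implementation would want a cryptographic hash, since `DefaultHasher` is neither collision resistant nor guaranteed stable across Rust releases).

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

/// Derive a storage key from the bytes of an upload.
/// Assumption: DefaultHasher is only a stand-in for a real content hash.
fn content_key(bytes: &[u8]) -> String {
    let mut hasher = DefaultHasher::new();
    bytes.hash(&mut hasher);
    // Fixed-width hex so keys are uniform in length.
    format!("{:016x}", hasher.finish())
}

/// Hypothetical URL layout: the same key names the object in the blob store
/// and appears in the public media URL.
fn media_url(bytes: &[u8]) -> String {
    format!("/media/{}", content_key(bytes))
}
```

The useful property is that identical uploads map to the same key, so storing the same image twice does not need a second object.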

Implementing all of that was fairly straightforward and I had it working months ago. One of the first things I hit, though, once I had that all working was that the server library I was using sent headers as lower case ("location") whereas the client I was using to test uploads checked only for camel case header names ("Location"). This meant that the client I used to test never saw that the image was uploaded properly. It additionally had the fallback behavior of encoding the image data as base64 and including it in the body content of the post if the upload "failed", so this made observing this failure pretty hard without actually doing a publish and ensuring the body of the new post looked right (didn't contain a giant base64 encoded image blob).
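HTTP header field names are case-insensitive, so a lookup that tolerates either spelling sidesteps this whole class of problem. The sketch below is not the client's or the server library's code; `find_header` is a hypothetical helper and the header list is modeled as plain name/value pairs for illustration.

```rust
/// Look up a header value by name, ignoring ASCII case.
/// Hypothetical helper: headers are modeled as simple (name, value) pairs.
fn find_header<'a>(headers: &'a [(String, String)], name: &str) -> Option<&'a str> {
    headers
        .iter()
        // "location", "Location", and "LOCATION" all match here.
        .find(|(key, _)| key.eq_ignore_ascii_case(name))
        .map(|(_, value)| value.as_str())
}
```

A caller would ask for `find_header(&headers, "Location")` and get the upload URL back regardless of how the server chose to capitalize the name.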

I tried working around this a few ways: explicitly specifying an uppercase header name in application logic (didn't matter) as well as trying to rewrite the header with Nginx config (also didn't work, although I never figured out why on this one). I also found an open pull request on the client to fix this behavior but that did not get merged during this time.

I considered writing/configuring a totally separate proxy server with the sole purpose of doing that but that seemed silly and toilsome. So that's where this implementation sat for months. At some point I became aware that the underlying libraries for the web "framework" I use now supported the camel case header output but I still let things sit. I didn't see an easy way to wire that into my server without making a modification to the framework.

Finally I realized I could configure the server separately from the handlers and managed to enable the config option I needed.

I was then finally able to test the image upload end to end and see it working!

Some things I realized when testing though:

Anyway, this allowed me to merge a long-running branch and desired feature into my micropub server. Maybe I'll post more images now. Maybe it'll just unblock me from implementing more desired stuff on the server itself since I won't have to deal with maintaining that long running feature branch.

Today I learned about a builtin macro in Rust that does this without adding additional lines or requiring formatting a string with the contents of some variable(s): The dbg macro will accept any expression, print it, and also return the result:

Prints and returns the value of a given expression for quick and dirty debugging.

    let a = 2;
    let b = dbg!(a * 2) + 1;
    //      ^-- prints: [src/main.rs:2] a * 2 = 4
    assert_eq!(b, 5);
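A couple of other ways I'd expect to use it, as a small sketch (the `demo` function is just a made-up name for the example). Since `dbg!` takes its argument by value, passing a reference avoids moving the thing you're inspecting, and because it returns the value it can be dropped into the middle of a larger expression. The output goes to stderr.

```rust
/// Hypothetical example function showing dbg! in two positions.
fn demo() -> (i32, usize) {
    let values = vec![1, 2, 3];
    // Pass a reference so `values` is not moved into the macro.
    let len = dbg!(&values).len();
    // Wrap a sub-expression inside an iterator chain; each doubled value
    // is printed and then passed along to `sum`.
    let total: i32 = values.iter().map(|v| dbg!(v * 2)).sum();
    (total, len)
}
```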

If you're writing a Rails app and use ActiveRecord, this is a potential pitfall to be aware of:

Let's say you have some model, MyModel, that you want to do a bulk lookup of based on a set of IDs that come from elsewhere. You'd do something like:

models = MyModel.where(id: some_array_of_ids)

This will work just fine. However, there is a bit of a lurking performance cliff with this approach. It turns out that, surprisingly, ActiveRecord doesn't de-duplicate the array of IDs before constructing the SQL query text. That is, if you happen to have an array with 5 IDs but they're all the same ID then you'll end up with an underlying query like:

SELECT * FROM my_models WHERE id IN (7, 7, 7, 7, 7);

On an array of just 5 elements this isn't too much of a big deal and doesn't hurt correctness in any way. You'll still get back either 0 or 1 results depending on whether ID 7 exists in your table. However, once you're potentially looking up tens, hundreds, or thousands of IDs at once then you might hit some larger performance problems.

At least on the database I'm most familiar with running in production, MySQL, it seemingly doesn't optimize this query in the way you'd expect and it could take much longer than just looking up the single ID of 7 (or if your array is much larger and you have multiple IDs in it, all duplicated, this can get quite bad). Even if your database does optimize this query, the query you're sending over the wire is still larger than it should be.

So the safer, more performant way to query for a list of IDs if you don't already know that they are unique is to first ensure they are before your query:

models = MyModel.where(id: some_array_of_ids.uniq)
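The same idea is not specific to Rails. As a rough sketch in Rust rather than Ruby, with hypothetical helper names (`unique_ids`, `in_clause`) and string-built SQL used purely for illustration (real code should use bound parameters), de-duplicating before building the `IN` list looks like this:

```rust
use std::collections::HashSet;

/// Remove duplicate IDs while keeping first-seen order,
/// similar in spirit to Ruby's Array#uniq.
fn unique_ids(ids: &[i64]) -> Vec<i64> {
    let mut seen = HashSet::new();
    // `insert` returns false for values already in the set, so repeats are dropped.
    ids.iter().copied().filter(|id| seen.insert(*id)).collect()
}

/// Build the IN-list portion of a query from de-duplicated IDs.
/// Illustration only: this is not how ActiveRecord constructs its SQL.
fn in_clause(ids: &[i64]) -> String {
    let list: Vec<String> = unique_ids(ids).iter().map(|id| id.to_string()).collect();
    format!("id IN ({})", list.join(", "))
}
```

Five copies of ID 7 collapse to a single-element list, so the query text stays proportional to the number of distinct IDs rather than the length of the input.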

The other week, a new teammate asked about my approach to resolving bugs. This was in the context of receiving a bug report (e.g. in the form of a ticket, task, etc) and so it might be slightly different than debugging in a broader sense. Still, I realized I had never formally written out (or discussed) debugging steps before and so thought it might be worth writing down more broadly. Writing it down made me think about and articulate what exactly I would do where before I might have had a mental model or internally intuitive process to follow but not in a well-defined way.

This might seem like it's very straightforward but in thinking about it more, I think it's a form of tacit knowledge in that you build it up over time but don't often read about it, discuss it, or otherwise think about it.

I listed out some steps from receiving the bug report through to resolution:

1. Read the bug report and understand the incorrect behavior as well as the expected or desired behavior. Check whether there were steps provided for reproducing the bug.

2. Try to reproduce the bug on your own. This could be in your local development environment (ideally) or in a staging/production environment if need be. If there were reproduction steps included with the bug, use those steps. Otherwise, try to recreate the conditions for the bug on your own. If you can't reproduce it (or can't do so reliably) then debugging and fixing it will be difficult or impossible.

3. Once you can reproduce it, you know the bug still exists. The next step is to start determining what code relates to the behavior you were just seeing and to read the code. If it's a small codebase or one you know well, finding and reviewing the code might be straightforward. If it's a particularly large project then there may be some searching required (either locally with tools like git-grep, ripgrep, editor search tools, or web-based tools like Github code search and livegrep).

4. Once you have a rough idea of the code related to the bug, the next step is trying to understand what behavior is triggering the bug. This could involve reading the code to understand some logic, figuring out why incorrect data is being passed in or produced, writing a test case to trigger the bug in code automatically, or just tweaking code and running it to experiment and see what happens (perhaps while looking at or adding logging or other diagnostics). This process could be quite involved depending on the complexity of the bug!

5. If you've found where the bug is in code and understand it, now you can try to implement a fix! Sometimes this is something like fixing off-by-one logic errors or similar minor mistakes but other times it can be much more involved. Verify that your changes fix the bug by running through the reproduction steps and seeing the bug is now gone. Further, and where possible/reasonable, implement a test case to automatically verify the bug is fixed and to prevent regressions. I'd be remiss if I didn't also mention that you should spend time trying to understand why the code was written in the way that it was in the first place. It could have been an intentional decision and understanding if that decision still makes sense is part of (attempting to) resolve the bug. Tools like viewing commit history or old tasks/tickets can often help with determining this context if you are not sure of it.

6. Create one or more commits with your bug fix (and ideally your new test case(s) as well). If the project you are working on has a code review process, follow the workflow for creating a new code review.

If you can get through all of these steps, chances are you've fixed the bug! Following these steps isn't always as straightforward as this short description might sound. Reproducing a bug, finding the relevant code, and figuring out why that code is behaving the way that it does are all worthy of longer posts of their own. Julia Evans has been talking about debugging a lot on Twitter lately, sharing some really good tips for debugging as well as describing why it can be a difficult process. Here's a blog post about that that I thought was very good.

Even once you've done some debugging and understand a bug, you might not easily be able to fix the bug! Sometimes there will be extensive refactoring needed to address the problem in a clean way or there could be architectural limitations that make fully addressing the bug difficult. That won't always be the case though! I've found that debugging is a great way to learn about a system, likely because it forces you to read lots of code!

The other weekend I decided to try out Nelson Elhage's llama project which is a tool for offloading computation to AWS Lambda. This post is going to be a collection of my thoughts about the project and its potential.

A while back I had read a couple of the blog posts / newsletter entries that described llama and was intrigued by the tool, but at the time the focus seemed to have been distributed compilation of C++ projects. I'm not doing so much C++ any more and so didn't have a strong use for that at the moment and set it aside, but the idea stuck in the back of my mind. At some point this came back to me and I started wondering if there was something like GNU Parallel for distributed computation, but on AWS Lambda where the machines didn't have to be waiting for you 24/7. What I'd not remembered was that llama is a general tool that can do just that!

A hobby project of mine lately has involved fetching a number of CSV files daily and doing some light processing on them (sort of git scraping). This was a project tracking the data my state publishes around COVID-19 vaccination rates across multiple dimensions. While it didn't involve a ton of CPU bound work, fetching CSV files in parallel and doing the small amount of processing I do seemed like a great way to poke at llama once I realized it had the ability to distribute arbitrary work.

With something like GNU Parallel you need remote machines sitting around ready to do compute though. With Lambda, it's on demand and can scale to very high parallelism for most purposes. Having such a tool could let everyday compute tasks that are expensive locally (slow on an older laptop, draining battery life and creating heat, or some combination of those) be done remotely. Assuming a fast enough internet connection, that is. Of course, things that take less time to compute locally than uploading and downloading the input/output to and from S3 might make less sense to do remotely. Overall, I'm excited about this concept: it provides a way to scale compute (or other things, like fast network access) without being tied to a powerful machine. After spending time with it, I think llama is a good early implementation of this idea.

I'm going to share some notes now about getting set up and my initial attempts to use llama. Overall, the README is sufficient to get started.

I did run into a few issues building/installing the command line tool on my Mac machine (tried both Go 1.15.x and 1.16.x)

Setting up the AWS Credentials and CloudFormation stack was very seamless once I had the tool built and installed!

Use 'git show <rev>:<path>' to output a single file's contents from a particular revision. This can be redirected into a file or piped into other programs, etc.

Something I have occasionally wondered is whether git provides a way to read a single file from a particular commit/revision without checking out that commit (and thus changing the state of the whole working copy). It seemed like something git should support, but in brief searches and man page readings I never found a way to do so.

Today, I finally read through the gitrevisions(7) manpage (referenced by the git-show manpage) and saw the <rev>:<path> syntax:

<rev>:<path>, e.g. HEAD:README, master:./README

A suffix : followed by a path names the blob or tree at the given path in the tree-ish object named by the part before the colon. A path starting with ./ or ../ is relative to the current working directory. The given path will be converted to be relative to the working tree’s root directory. This is most useful to address a blob or tree from a commit or tree that has the same tree structure as the working tree.

This seems to be exactly what I needed and it turns out git show supports this syntax for outputting a particular file!
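If you want the same thing from a script, a minimal sketch might look like the following. It only shells out to `git show <rev>:<path>`; the function names are made up for illustration, and it assumes `git` is on your PATH and that you run it inside a repository.

```python
import subprocess
from typing import List


def git_show_command(rev: str, path: str) -> List[str]:
    """Build the argv for `git show <rev>:<path>` (hypothetical helper)."""
    return ["git", "show", f"{rev}:{path}"]


def read_file_at_revision(rev: str, path: str, repo_dir: str = ".") -> bytes:
    """Return the contents of `path` as of `rev` without touching the working copy."""
    result = subprocess.run(
        git_show_command(rev, path),
        cwd=repo_dir,
        check=True,           # raise CalledProcessError if git exits non-zero
        capture_output=True,  # collect stdout/stderr instead of printing them
    )
    return result.stdout
```

Calling `read_file_at_revision("HEAD", "README")` would return the bytes of README at HEAD, which you could then write to a file or feed into another program, much like redirecting or piping on the command line.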

Yesterday I read Dealing with Non-ASCII Characters and the talk about curly quotes copied and pasted from Google Docs or Slack reminded me of a "fun" problem that I used to have to deal with occasionally that was along similar lines. It had to do with copying and pasting a full command line invocation complete with flags and arguments out of a tool that was commonly used on a project I was working on. Maybe you’d paste in a fully formed command as an example into a design or a set of FAQs or something – however when someone copied and pasted it back out a flag that might’ve been --debug turned into —debug. In other words, the double hyphen was getting turned into an em-dash.

On its own this isn’t a huge problem, although the command might not work as expected. If you use certain command line parsing libraries/frameworks that make your life easier in other ways, though, this situation might result in failures or maybe even an unhandled exception and a stack trace being spewed out, pointing at parsing code inside the framework where it reads argv.

What to do?

Depending on the library being used and exactly how it parses the parameters, there may not be an easy way to handle exceptions like this from within the framework. You might have to wrap your call that "enters" the framework in your main function with some exception handling in order to be able to avoid these unhandled exceptions. Then you could provide a specialized "are you sure you used valid ASCII characters in your command?" error message. However, as Julia Evans points out: unicode is valid in command line arguments! Unfortunately I don’t really have any fix to offer for this situation if your framework doesn’t catch it for you. This post is more a word of warning in case you or someone you know comes to you asking what’s going on with a command that was copied and pasted from elsewhere – I had to debug this for people a number of times in the past. Probably the easiest "fix", if you can change the place the command was copied from, is to ensure double dashes weren’t "helpfully" turned into an em-dash.

Jonathan provided a cool regex at the end of his post though. It will help with detecting non-ASCII characters and describes a trick for remembering the visible range of the ASCII table. It won’t help with the exact situation I describe above but if you’re trying to figure out why some JSON you copied and pasted is now invalid or something, it’s definitely worth keeping in mind.
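As a rough sketch of that kind of check, here is one common way to flag anything outside the visible ASCII range (space through tilde). Whether this matches the regex in Jonathan's post exactly is an assumption on my part, and the function name is made up for illustration.

```python
import re

# Anything outside the visible ASCII range: space (0x20) through tilde (0x7E).
# Note that this also flags tabs and newlines, not just non-ASCII characters.
OUTSIDE_VISIBLE_ASCII = re.compile(r"[^ -~]")


def find_suspicious_chars(text):
    """Return (index, character, code point) for each character outside ' '..'~'."""
    return [
        (m.start(), m.group(), f"U+{ord(m.group()):04X}")
        for m in OUTSIDE_VISIBLE_ASCII.finditer(text)
    ]


# JSON pasted out of a document editor with curly quotes around the key.
pasted = "{\u201ckey\u201d: 1}"
hits = find_suspicious_chars(pasted)
```

Here `hits` contains the two curly quotes along with their positions, which is usually enough to spot why a parser is rejecting the input.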

The other day I was working on a small web project (more on this soon) and was using Warp. I wanted to impl some From<T> conversions for certain error types into my custom type to make error handling a little less clunky. I ran into some problems with doing that and, though I didn’t realize it at the time, I believe I was on Rust 1.40. I saw this morning that Rust 1.41 relaxed some of the restrictions on trait implementations, so that you can implement a foreign trait on a foreign type in more cases as long as a type local to your crate appears in the trait’s parameters. While this didn’t help my particular case, I might’ve been able to use it if I had refactored things around.

I also noticed that Rust 1.42 had already been released as well (March 12th). It seems to have brought some nice improvements to pattern matching including allowing subslices in a slice pattern and the matches! macro. The former would probably be useful in the type of byte matching I was working with recently in my photo sorting tool (see part 1, part 2 coming soon).

I guess it’s time for me to subscribe to the [Rust blog](https://blog.rust-lang.org/) in my Newsblur account!

As an update to my previous post, I wanted to say that I hooked up my keyboard and mouse to a KVM that I had lying around in a box. This makes switching away from the work computer at the end of the day much easier (no more crawling under my desk).

Friday marked the end of three weeks of 100% working from home and about a week and a half of Bay Area shelter-in-place being in effect. The weekend started to feel like staying at home was “the new normal”. Overall, I think that I have adjusted to the working remote part of this experience rather well but less so to the other parts of shelter-in-place (nor do I hope to ever fully adjust to those…). I even like certain aspects of working from home more than being in the office: easy impromptu Zoom meetings w/o booking a room, more control over my space/desk, a more relaxing and less urgent morning overall. I even purchased a webcam to make meetings from my desk easier!

Of course, I miss seeing people in the office daily and sharing things like lunch or tea with my coworkers. It would certainly be nice to have a dedicated space for working as well. One side effect of not having that dedicated space (as well as more time at home overall) is that I’ve noticed it’s much easier to let work happen after working hours. For example I needlessly jumped into debugging a minor incident at work when others were already on it and I was not paged (though I am on call this week for other systems).

In the little I’ve been out visiting businesses, it has also seemed that people are adjusting and taking reasonable precautions: grocery stores like Luke’s Local and Safeway have instituted “one in, one out” rules to limit the number of visitors in their stores and there are lines outside. My favorite coffee shop in the city, Bernie’s, had chairs blocking direct access to their bar area to create more space between those working and customers. People broadly seem to be more respectful of the “6 feet” rule now as well.

For working days, I have been trying to remain in a decent morning routine: make tea, go for a walk, have breakfast, and start work either after breakfast or while eating it. I do need to get better about "after work" routines though. I’ve also been either going for a run or a second walk when I sign off of work for the day. One problem I have been encountering is that it is too easy to leave my work computer plugged into my monitor and keyboard at my desk (partially because I need to crawl behind my desk to swap it back to my other computer) and it is also too easy to leave Slack up and get sucked in to work if something comes up. I’m trying to improve the after work routine this week.

All of that said, I worry this pandemic will continue to get worse in the coming days and weeks – both in California and in the country as a whole. I think this has affected my overall productivity in work and everyday life. On the one hand I know that I am incredibly fortunate and privileged to have a job that I enjoy and can continue doing in the face of all these societal changes. Especially considering that 3 million+ people applied for unemployment insurance in the US just last week. On the other hand, things being less productive adds to my personal worry a small amount ("I’m not working like the normal me"). That is OK. I try to remind myself that things aren’t supposed to be normal right now and am fortunate that work is supportive of adjusted expectations in this time.

The bright spot is that early indications are that shelter in place is working for the Bay Area. I’m happy that we moved on that early. I’ve been following the Johns Hopkins daily situation report and, more recently, the SF Chronicle’s case tracking graphs for CA and the Bay Area. The Chronicle uses a combination of state/local health department data as well as that from the COVID Tracking Project. Early indications aside (fingers crossed that they are true), California’s 60K pending tests are a big unknown. Here’s to the next two to four weeks seeing marked improvement in the Bay Area, followed by California and the country at large...

This is the initial post in what is intended to be a series where I write about what I learn while reverse engineering photo file formats with the goal of reading metadata (e.g. Exif). It isn’t meant to be a definitive guide to the format but rather documenting the thought process of taking the format apart by only looking at file data rather than reading specifications, library implementations, or other more qualified information sources. Why? Because sometimes using hexdump and hacking together a custom tool is just more fun.

Towards the beginning of the year I was interested in writing a tool that would sort my photos into a directory tree that followed the year/month/date/<file> format. I know such tools already exist but wanted a little project of my own to work on. To make it more fun, I wanted to see whether I could do this without any library support for reading Exif data out of photos and instead parse the needed information myself. It seemed to be the sort of thing that would be perfect for Rust (or any memory safe language, for that matter): reading a set of file data with unknown formats from usually trustworthy but sometimes potentially untrusted sources. I figured that I could start by implementing my sorting program and, if I finished that and still had motivation to learn more about these formats, continue on to write a generalized library. Whether I have that motivation is still TBD. I’ll also note that I wanted to rely on the date embedded into the file rather than the file metadata provided by the filesystem (such as ctime) as that can be unreliable in certain circumstances.

Before I got started I did some basic searching and learned that the CR2 files that my Canon cameras output in raw mode are only one of multiple "raw formats" and that they are not a standardized format. That’s good to know: there’s no spec to speak of anyhow! I did learn that there’s a program called `dcraw`, a C program (not library!) maintained by Dave Coffin, that supports most raw formats. It is more focused on processing the photos than on extracting metadata. While I found that interesting, I didn’t use it as a base for my program. I was open to researching further if I got stuck but so far have not needed to. With all that out of the way, let’s dig in!

First off, I leaned pretty heavily on standard Unix tools for extracting the info that I wanted. hexdump and strings are your friends for this type of work. In this post I mostly focus on the output of hexdump but any hex editor would also do. The following is a hexdump of the first few hundred bytes of an image I took. The header does go on for a fair bit longer, and I see things like the lens that I used and the aperture setting in that later output, but for now I’m most concerned with extracting the date/time of the photo, which is near the start of the file.

    $ hexdump -C -n 320 photo.CR2
    00000000  49 49 2a 00 10 00 00 00  43 52 02 00 4a b2 00 00  |II*.....CR..J...|
    00000010  12 00 00 01 03 00 01 00  00 00 70 17 00 00 01 01  |..........p.....|
    00000020  03 00 01 00 00 00 a0 0f  00 00 02 01 03 00 03 00  |................|
    00000030  00 00 ee 00 00 00 03 01  03 00 01 00 00 00 06 00  |................|
    00000040  00 00 0f 01 02 00 06 00  00 00 f4 00 00 00 10 01  |................|
    00000050  02 00 0e 00 00 00 fa 00  00 00 11 01 04 00 01 00  |................|
    00000060  00 00 7c 18 01 00 12 01  03 00 01 00 00 00 01 00  |..|.............|
    00000070  00 00 17 01 04 00 01 00  00 00 34 61 1e 00 1a 01  |..........4a....|
    00000080  05 00 01 00 00 00 1a 01  00 00 1b 01 05 00 01 00  |................|
    00000090  00 00 22 01 00 00 28 01  03 00 01 00 00 00 02 00  |.."...(.........|
    000000a0  00 00 32 01 02 00 14 00  00 00 2a 01 00 00 3b 01  |..2.......*...;.|
    000000b0  02 00 01 00 00 00 00 00  00 00 bc 02 01 00 00 20  |............... |
    000000c0  00 00 c4 b2 00 00 98 82  02 00 01 00 00 00 00 00  |................|
    000000d0  00 00 69 87 04 00 01 00  00 00 be 01 00 00 25 88  |..i...........%.|
    000000e0  04 00 01 00 00 00 72 aa  00 00 74 b1 00 00 08 00  |......r...t.....|
    000000f0  08 00 08 00 43 61 6e 6f  6e 00 43 61 6e 6f 6e 20  |....Canon.Canon |
    00000100  45 4f 53 20 37 37 44 00  00 00 00 00 00 00 00 00  |EOS 77D.........|
    00000110  00 00 00 00 00 00 00 00  00 00 48 00 00 00 01 00  |..........H.....|
    00000120  00 00 48 00 00 00 01 00  00 00 32 30 32 30 3a 30  |..H.......2020:0|
    00000130  32 3a 30 31 20 31 34 3a  33 32 3a 31 34 00 00 00  |2:01 14:32:14...|
    00000140  00 00 00 00 00 00 00 00  00 00 00 00 00 00 00 00  |................|

Let’s note down some relevant metadata that we can see here. I don’t particularly need this right now for sorting but am noting it for later: 244 bytes in (offset 0xf4) we can see the brand, a null byte, and the model name of my camera: Canon and Canon EOS 77D. It seems like the manufacturer of the camera (Canon) is terminated by a null, after which the model is included. There are quite a few null bytes following this, so either the model is a fixed-width string where the nulls are used to pad out to a particular offset or there’s some delimiter pattern that I haven’t recognized yet.
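To make the "null-terminated string" guess concrete, here is a minimal Python sketch of reading the make and model out of the header bytes. My actual tool is written in Rust, so this is only an illustration: the function names are made up, and the 0xF4 offset is just what I observed in this one file, not something taken from a spec.

```python
def read_c_string(data, offset):
    """Read bytes from `offset` up to (not including) the next null byte."""
    end = data.index(b"\x00", offset)
    return data[offset:end].decode("ascii")


def read_make_and_model(data, make_offset=0xF4):
    """Read two consecutive null-terminated strings: the make, then the model.

    0xF4 (244) is where "Canon" starts in the hexdump above; it is an
    observation about a single file and an assumption everywhere else.
    """
    make = read_c_string(data, make_offset)
    # The model starts right after the make's terminating null byte.
    model = read_c_string(data, make_offset + len(make) + 1)
    return make, model
```

Given the first few hundred bytes of the photo, `read_make_and_model(data)` would return the two strings separately. It says nothing about whether the trailing nulls are padding or a delimiter, which is still an open question.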

Now for the stuff I need to do directory sorting: we can see that 298 bytes in, after a bunch of null bytes (and a random ’H’...), we have the start of our date string: 2020:0. The next line of our hexdump output gives us the second half of the date, a space, and the time: 2:01 14:32:14. Three null bytes trail that. Putting that all together we get our date/time of 2020:02:01 14:32:14!

Now, I’m guessing that the metadata fields are not aligned around 16 byte lines like our hexdump output is here. It isn’t immediately clear what, if any, line oriented alignment there might be on this data. There certainly appear to be a lot of null bytes surrounding the useful data. Perhaps it’s just a lot of data packed in at various offsets and my particular camera doesn’t populate most of the fields. 🤷

For a very brittle first implementation of date parsing, all I had to do was hard code this offset into my sorting program, read the next 10 bytes, and parse them to get my directory structure. You can see the parsing logic in the first commit of my photo sorting tool. That commit only parsed and printed dates; it didn’t sort any photos just yet. The second commit took the set of bytes that represented the date and wired up logic to do file renaming. If you read through that, you’ll note there was also a mode to rename using git rather than straight filesystem renames. That is because some of my photos are managed by `git-annex`, and renaming through git provides some extra safety: any operation can be undone so that I don’t lose any data.
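The hard-coded-offset approach can be sketched in a few lines of Python. This is not the code from my tool (which is Rust); the names are hypothetical, and the offset of 298 only holds for the file shown in the hexdump.

```python
import os
from datetime import datetime

# Offset observed in the hexdump for one camera and one format. This is an
# assumption that will not hold for other cameras or for JPG files.
DATE_OFFSET = 298
DATE_LENGTH = 10  # len("YYYY:MM:DD")


def parse_capture_date(data, offset=DATE_OFFSET):
    """Parse the 'YYYY:MM:DD' bytes at `offset` into a date."""
    raw = data[offset:offset + DATE_LENGTH].decode("ascii")
    return datetime.strptime(raw, "%Y:%m:%d").date()


def destination_path(root, filename, data):
    """Build root/year/month/day/filename from the embedded date."""
    d = parse_capture_date(data)
    return os.path.join(root, f"{d.year:04d}", f"{d.month:02d}", f"{d.day:02d}", filename)
```

For the photo above, `destination_path("sorted", "photo.CR2", data)` would point at `sorted/2020/02/01/photo.CR2`. If the bytes at that offset are not a date, `strptime` raises a `ValueError`, which is about the only safety net a version this brittle has.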

It turned out that this very unreliable implementation works! But only for photos taken with this particular camera in this particular format. Some older CR2 files I have from a different Canon camera have a really similar structure but a different offset for the date. Additionally, JPG files written by both of these cameras have JPG magic number stuff prefixing all this metadata, so we’ll have to build something more reliable. We’ll start covering all this in more detail in part 2.

I read over 25 new books this year and re-read a bunch of old favorites as well. I wanted to cover some of my favorites here as well as those that I’ve been dragging my feet on finishing even though they’re on topics I’m interested in or stories I enjoy.

Thanks to Jonathan Palardy for inspiring me to read 10 pages a day, I formed a new habit to get me reading more than ever. I’ve long loved reading new books but also long felt too exhausted to do so in the evening. By re-arranging my schedule and making time to read in the mornings, I’ve managed to make consistent progress on books I’ve long wanted to read. Since Thanksgiving I’ve slightly fallen out of my routine due to holiday season hectic schedules (and probably also some laziness) but am excited to recommit to this goal in the new year.

Here are some of the books I read in 2019 that stood out for me, in no particular order.

This was a rather interesting book about the origins of trail systems, the history of certain trails and of the activity of hiking. It covers animals and their trail systems as well as various cultures’ relationship with trails and hiking. I really enjoyed reading this one and was rather sad when I left my copy on an airplane when I was only part way through the book – the first time I’ve ever lost something on an airplane! Thankfully I managed to make it through the book before losing my second copy.

A history of San Francisco in the late 60s and early 70s up through the early 90s. When I started reading at least 10 pages a day, this was the book that I was struggling to get through. For non-fiction books I find the motivation I have towards the end of the day to engage and learn is basically gone after a day of working. That said, I learned a ton of interesting tidbits about people and places from this book!

A look through Adam Savage’s career and life as well as some methods he’s picked up over the years for keeping life organized. A fun, quick read.

I discovered Will Larson’s blog sometime last year. The first piece on his blog that really stuck with me was about systems thinking, and the book by Donella Meadows that he recommended as an introduction to the topic was one of my favorite books last year, so I was really excited to pick this book up.

It is beautifully designed inside and out. While it is mostly about engineering management in the tech industry, as a non-manager I found it to be helpful as well. I enjoy Will’s thoughtful discussion of various components of life on a team of people building a technology product and particularly where the traditional approach is questioned or approached in a different light. As a reader of his blog, I found some of the content to be pulled from there but there was also enough new content that expanded on it as well.

This book introduces multiple ideas in software design and explores the trade offs between some of these ideas. While I don’t agree with all of the ideas, the book effectively demonstrates that different situations call for different approaches to software design and highlights that simplicity has value.

I had somehow not read this one before. A quick read and still very applicable to today despite being published in the 50s.

I actually enjoyed this one more than volume 1 in this new series in the same world (but different time) as His Dark Materials. I always enjoyed the world of Lyra and the daemons and this book was no different.

This book, along with The Name of All Things, makes Jenn Lyons one of my favorite new authors. For a first published book (as far as I could tell), I thought she was amazingly good at world building. The storytelling style of having a sort of book within a book and the narrator being a character recounting events was interesting and a nice, different approach as well. I was happy to see that was carried over to The Name of All Things. The timeline was sometimes a bit confusing but I appreciated how everything felt like it came together in the end. I also always love footnotes and this book has lots of footnotes [1].

Neal Stephenson’s new book is largely a fictional fantasy book about the (maybe) not-too-distant future with AIs, robotic "presence" bodies, quantum computing, full brain scans being used to simulate people after death, and more. It also happens to raise interesting questions about the nature of the self and of death: consciousness, personality, memory, and soul vs brain.

I tore through those books that had already been published and spent a lot of this year excitedly waiting for the next installments. I love the concept of the world in these books and it is one of my favorite new series.

Here’s a short list of books that I didn’t manage to finish this year despite them being in progress for some time. These are not books I consciously decided not to finish but rather that I moved on to other books that found my interest at the time. I hope to finish these soon:

[1]: I have a longstanding TODO item to make this blog support footnotes in a better way...

There are many good options for tools that provide insight into your running programs on Linux, from newer approaches like writing eBPF programs against the kernel to older tools like strace, perf, and `gdb`. Today I want to talk a little bit about a feature of many Unix kernels that is often used by other tools but can also be useful on its own for resolving little questions or problems you might have. That feature is ProcFS.

I wanted to write about a couple of use cases that others may find useful. The first is the ability to dump the command line and environment of a given process. There are certainly other ways of obtaining the command line of a process but it isn’t always easy to inspect the environment variables present at runtime. This can be especially useful when trying to debug or verify some behavior when the program doesn’t otherwise expose its configuration at runtime (e.g. via logging).

Let’s pretend that we have a process that we want to inspect. Perhaps it’s a Python process that is doing some web serving. Let’s use ProcFS to determine the command line arguments and the environment variables set within this process:

    $ pgrep python
    2961
    $ cat /proc/2961/cmdline
    python3.6-mhttp.server3000
    $ xxd /proc/2961/cmdline
    00000000: 7079 7468 6f6e 332e 3600 2d6d 0068 7474  python3.6.-m.htt
    00000010: 702e 7365 7276 6572 0033 3030 3000       p.server.3000.
    $ cat /proc/2961/environ
    MYENV=ohhaiSECRET=blah
    $ xxd /proc/2961/environ
    00000000: 4d59 454e 563d 6f68 6861 6900 5345 4352  MYENV=ohhai.SECR
    00000010: 4554 3d62 6c61 6800                      ET=blah.

First, we determine the PID of the process to inspect. From there we dump the contents of /proc/<pid>/cmdline and /proc/<pid>/environ (I do so both in ASCII and hex so that you can see the exact format). Note that each command line argument and environment variable is separated by a null byte. Here you can see that we have a web server listening on port 3000 using Python’s built-in http.server module. We’re also able to see that there are two environment variables in the server process’ environment and what their names and values are.
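Since the null bytes make the raw `cat` output hard to read, a small script can split them back apart. This is a minimal sketch with made-up function names; it assumes a Linux-style /proc and that you have permission to read the target process's files.

```python
def split_null_delimited(raw):
    """Split a null-delimited procfs blob into a list of strings."""
    # The trailing null leaves an empty final piece, so drop empty entries.
    # Limitation: this also drops arguments that are genuinely empty strings.
    return [part.decode("utf-8", "replace") for part in raw.split(b"\x00") if part]


def parse_environ(raw):
    """Turn NAME=value entries into a dict, splitting on the first '=' only."""
    entries = split_null_delimited(raw)
    return dict(entry.split("=", 1) for entry in entries if "=" in entry)


def read_cmdline(pid):
    with open(f"/proc/{pid}/cmdline", "rb") as f:
        return split_null_delimited(f.read())


def read_environ(pid):
    with open(f"/proc/{pid}/environ", "rb") as f:
        return parse_environ(f.read())
```

For the process above, `read_cmdline(2961)` would give the four arguments as a list and `read_environ(2961)` a dict with `MYENV` and `SECRET` as keys.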

I’ve personally found this environment variable dumping ability quite useful for debugging a program at runtime. Maybe you have access to the source and are observing some behavior in logs which is not trivial to reproduce and are trying to correlate that behavior back to the source. If the program derives behavior from the environment, sometimes being able to dump the exact state of the environment can be helpful in your debugging.

Another possibility is to inspect the state of a given file descriptor within a process. If you know a given file descriptor ID, you can use /proc/<pid>/fdinfo/<fd> to dump the state of the file descriptor (including the current position of a file, the mode used to open the descriptor, socket or event state, and more).
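The fdinfo files are plain text with one `key: value` pair per line, so they are easy to read from a script too. The following is a rough sketch with hypothetical function names. It keeps only the last value for a repeated key, so file types that emit several similar lines (epoll descriptors, for example) would need something smarter.

```python
def parse_fdinfo(text):
    """Parse 'key: value' lines from the contents of /proc/<pid>/fdinfo/<fd>."""
    info = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:  # skip any line that has no colon at all
            info[key.strip()] = value.strip()
    return info


def read_fdinfo(pid, fd):
    with open(f"/proc/{pid}/fdinfo/{fd}") as f:
        return parse_fdinfo(f.read())
```

For a regular file you would typically see at least `pos`, `flags` (an octal number), and `mnt_id`, so `int(read_fdinfo(pid, fd)["pos"])` is the current offset into the file.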

One last handy thing that comes up occasionally when debugging a hung process (especially one in uninterruptible sleep, the dreaded D state) that you can’t get relevant logs for or hook up to a debugger is getting the stack trace of the kernel syscall that the process is waiting on, if any. Especially for processes in uninterruptible sleep, where the hang is usually I/O related, this can be helpful for getting at least some small indication of what is blocking the process from making progress. Here’s an example from a web server that is running normally:

    root@server:~# cat /proc/2411/stack
    [<0>] ep_poll+0x29c/0x3a0
    [<0>] SyS_epoll_wait+0xc6/0xe0
    [<0>] do_syscall_64+0x73/0x130
    [<0>] entry_SYSCALL_64_after_hwframe+0x3d/0xa2
    [<0>] 0xffffffffffffffff

We can see that the process is in epoll wait, which should not be surprising for a web server waiting on socket events. For a hung process you might instead see it reading from or writing to a socket, a file on disk, or some remote filesystem.

It would be well worth your time to read some sections of man 5 proc for more details on various ProcFS features. You’re almost guaranteed to learn something and it might surprise you to learn about all the various things that can be introspected via a file-like interface. Everything is a file, indeed.

Last week I read an OpEd in the NYTimes with the above title that I found to miss the mark a fair bit. It may even discourage people from setting up this important protection on their most critical accounts, which would be a most unfortunate outcome. You should enable two-factor authentication on any account that you can. The article concludes with a call for more data about the effectiveness of two-factor against various forms of account compromise, which I can entirely agree with; however, this is buried deep beneath paragraphs casting doubt on the effectiveness of two-factor auth.

The two primary complaints seem to be that two-factor authentication cannot protect against sophisticated phishing attacks and that we do not have enough information about its effectiveness, given the perceived inconvenience. Admittedly, the article does state that these facts alone are not reason enough to skip it, but that comes after seven paragraphs describing phishing attacks against various types of accounts, and it then equivocates by introducing the complaint about the lack of data on its effectiveness.

My primary issue with this is that it seems to conflate phishing - one type of account compromise attack - with the broader class of account compromise attacks. There is not a single mention of the protection that two-factor authentication can provide against a compromised primary credential (i.e., a password). The protection provided against credential dumps from breached services cannot be ignored. Even if you follow other best practices and do not reuse any passwords across websites, there is often a gap of months between the time a breach happens and the time it is known publicly. Without two-factor authentication, an account on a service that has had a password breach is far more vulnerable than it would be with a second factor. Assuming no password reuse, I would go so far as to say that two-factor authentication vastly mitigates primary credential theft since it would require a secondary attack (e.g., phishing, phone compromise, etc.) to bypass the second factor.

Is the author right that some two-factor authentication methods are vulnerable to phishing? No doubt about it, yes they are. However, even the weakest forms (e.g., SMS as a second factor) can mitigate primary credential compromise as described above. There are also two-factor auth methods that are much stronger against phishing attacks than SMS or one-time passcode generator devices/apps. U2F devices are one such method. They prevent phishing as there is no passcode to phish - they work via a challenge-response mechanism that fails on a phishing website even if the human doing the authenticating is fooled. Another, lesser protection is a push-based mechanism like Duo Push where you have a chance to inspect the login request. Chances are that it’s still possible to be fooled by a phishing attempt with a push-based second factor as mentioned in the original article, but, given a location for the login attempt, it is at least possible to notice that the attempt didn’t come from where you expected. A mismatch in the location of the phishing server and the authenticating user is likely to be the case in many less sophisticated phishing attacks. (Full disclosure: I previously worked for Duo.)

Lastly, we have the call for more data from larger organizations about the effectiveness of 2FA against phishing attacks. I’m on board with this and wish the security teams at larger organizations like Google or Facebook were more transparent about results here. That said, the existence of an attack against specific 2FA methods does not equate to proof that 2FA doesn’t work at all. I worry about people not well educated in computer security reading critical articles like this and concluding 2FA isn’t worth the hassle. I’m not arguing that we shouldn’t question our current best practices, but when it comes to security discussions consumed by the general public, we have to be well balanced because there is a real risk of discouraging people from enabling it.

Frequently when processing data, it’s useful to slice it by a certain dimension. It can quickly answer all sorts of questions and help

Some examples of the types of questions it might answer are:

...and endless others. It’s one of the most frequent tools I reach for whether I’m debugging, refactoring, designing a new feature, or architecting a new system. Being able to look at the same data from multiple angles is invaluable. Programmers aggregate data like this all the time in database queries and on the command line.

An infrequently mentioned function called groupby in the itertools module does just that for iterables in Python. One frustration with the standard library’s particular implementation that might not be obvious is that the data likely needs to be sorted first. Quoting the docs:

The operation of groupby() is similar to the uniq filter in Unix. It generates a break or new group every time the value of the key function changes (which is why it is usually necessary to have sorted the data using the same key function). That behavior differs from SQL’s GROUP BY which aggregates common elements regardless of their input order.
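To see what that quote means in practice, here is a small sketch comparing `itertools.groupby` on unsorted and sorted input. The `grouped` helper is just something made up here to materialize the results for inspection.

```python
from itertools import groupby


def is_odd(num):
    return num % 2 != 0


def grouped(iterable, key_fn):
    """Materialize groupby() output as a list of (key, items) pairs."""
    return [(key, list(items)) for key, items in groupby(iterable, key_fn)]


# Unsorted input: a new group starts every time the key changes.
unsorted_result = grouped([1, 2, 3, 4], is_odd)
# -> [(True, [1]), (False, [2]), (True, [3]), (False, [4])]

# Sorting by the same key function first yields one group per key.
sorted_result = grouped(sorted([1, 2, 3, 4], key=is_odd), is_odd)
# -> [(False, [2, 4]), (True, [1, 3])]
```

The unsorted call produces four groups for only two distinct keys, which is exactly the uniq-like behavior the docs describe.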

This isn’t always convenient and doesn’t seem like it should be required in most cases. Fortunately, with the help of collections.defaultdict we can build a grouping function that doesn’t have this requirement:

    import collections


    def group_by(iterable, key_fn):
        """
        >>> def is_odd(num):
        ...     return num % 2 != 0
        ...
        >>> group_by(range(10), is_odd)
        defaultdict(<class 'list'>, {False: [0, 2, 4, 6, 8], True: [1, 3, 5, 7, 9]})
        """
        result = collections.defaultdict(list)
        for item in iterable:
            result[key_fn(item)].append(item)
        return result

The primary tradeoff of this version when compared to the standard library is that the input iterable has to be finite. The benefit of being able to assume pre-sorted data is that you can build an iterator over intermediate results, by each key type. Most often I already have the complete set of data so this isn’t a concern for me but it is a consideration.

This isn’t always the tool you want. In particular, your datastore is probably faster at grouping things before returning results than the Python VM is. However, data isn’t always already in a datastore - it can also come from files, input streams, the network, whatever. Go forth and group things!

A few weeks ago I had occasion to chunk a potentially large list of items into smaller lists for processing in batches in a Python codebase. While I remembered that itertools didn’t have such functionality built-in, it did have a recipe to do so, called `grouper`. For my use case, this recipe worked fine so I used it but it got me wondering about why Python didn’t provide what seems to be a fairly common operation (in another codebase I’d had occasion to do this a lot and we had a helper function for it). This led me down two paths.

One was to gut check that I wasn’t crazy for thinking this seemed to be a valid standard library function. I spot checked a few "batteries included" languages that I have some familiarity with (Ruby and Rust) and each has at least one built-in solution for this. Even C++ is considering including a solution in the standard via the Ranges-V3 proposal but that isn’t a surprise given the breadth of language features and library functions (particularly around containers) that C++ has.

The other path was to check out the history for why this hadn’t been included in Python. That led me to [a thread on the Python mailing lists](https://mail.python.org/pipermail/python-dev/2012-June/120781.html) and a Python issue. While it would be easy to summarize my (outside) view of the reason for not including a standard function as "bikeshedding", the discussions did bring up a number of tradeoffs regarding the interface and implementation.

Some of these tradeoffs:

At the end of the day, I filed this under one of the oddities of Python and moved on with the task at hand but thought it would be interesting to share. There simply isn’t consensus over what constitutes the right chunking helper function for the Python standard library, nor does there appear to be any interest in figuring out the sticking points. FWIW one of the biggest sticking points seems to be around what to do with an off-size chunk at the end but I find myself in agreement with the original mailing list poster that this isn’t a huge deal and callers can handle this quite easily if it were a concern.
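For reference, here is a sketch of the off-size chunk question using the `zip_longest`-based form of the `grouper` recipe as I remember it from the itertools docs (the exact recipe in the docs has changed between Python versions, so treat this as an approximation). The `grouper_no_fill` wrapper and the `_FILL` sentinel are hypothetical names of mine; they show one way a caller could strip the padding themselves.

```python
import itertools

# Hypothetical sentinel so that legitimate None values are not stripped.
_FILL = object()


def grouper(iterable, n, fillvalue=None):
    """Collect data into fixed-length tuples, padding the last one."""
    # n references to the same iterator, so zip_longest pulls n items per tuple.
    args = [iter(iterable)] * n
    return itertools.zip_longest(*args, fillvalue=fillvalue)


def grouper_no_fill(iterable, n):
    """Caller-side handling of the off-size chunk: drop the padding."""
    for group in grouper(iterable, n, fillvalue=_FILL):
        yield [item for item in group if item is not _FILL]


padded = list(grouper(range(5), 2))
# [(0, 1), (2, 3), (4, None)]

trimmed = list(grouper_no_fill(range(5), 2))
# [[0, 1], [2, 3], [4]]
```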

For kicks, I threw together a few additional chunking functions, each with a slightly different implementation:

    import itertools


    def chunk(iterable, n=1):
        """
        Returns an iterable of lists of at most length n. Does not add filler
        values if not enough input values for a given chunk.

        >>> list(chunk(range(6), n=2))
        [[0, 1], [2, 3], [4, 5]]
        >>> list(chunk(range(6), n=4))
        [[0, 1, 2, 3], [4, 5]]
        """
        it = iter(iterable)
        current = 0
        next_chunk = []
        while True:
            while current < n:
                try:
                    next_chunk.append(next(it))
                    current += 1
                except StopIteration:
                    # Input exhausted: emit any partial chunk, then stop.
                    if next_chunk:
                        yield next_chunk
                    # Use return here; raising StopIteration inside a
                    # generator is a RuntimeError since Python 3.7 (PEP 479).
                    return
            yield next_chunk
            current = 0
            next_chunk = []

    def chunk2(lst, n=1):
        """
        Chunks an input list by size n using slices.

        >>> list(chunk2(list(range(6)), n=2))
        [[0, 1], [2, 3], [4, 5]]
        >>> list(chunk2(list(range(6)), n=4))
        [[0, 1, 2, 3], [4, 5]]
        >>> list(chunk2(list(range(4)), n=5))
        [[0, 1, 2, 3]]
        """
        last_used = 0
        # Compare against len(lst), not len(lst) - 1, so that a trailing
        # chunk holding a single item is not dropped.
        while last_used < len(lst):
            chunk = lst[last_used:last_used + n]
            last_used += n
            yield chunk

    def chunk3(iterable, n=1):
        """
        Chunks any iterable by size n using islice.

        >>> list(chunk3(range(6), n=2))
        [[0, 1], [2, 3], [4, 5]]
        >>> list(chunk3(range(6), n=4))
        [[0, 1, 2, 3], [4, 5]]
        >>> list(chunk3(range(4), n=5))
        [[0, 1, 2, 3]]
        """
        # Share one iterator so each islice call continues where the last
        # one stopped; this also works for one-shot iterators.
        it = iter(iterable)
        while True:
            # An islice object is always truthy and has no len(), so
            # materialize it into a list before checking it.
            chunk = list(itertools.islice(it, n))
            if not chunk:
                return
            yield chunk

Inspired by a tweet from @munificentbob, I recently read this paper about Lua. I took some notes while reading and thought I’d dump them here. Some of the implementation decisions and data structures were pretty interesting to me. These notes are pretty raw and only a little bit cleaned up from my handwritten shorthand.

Many things in the paper were very interesting to me but these were some of the highlights (I have some notes on all of these below):

I don’t have very much experience with building interpreters or compilers - an undergrad compilers class and a fair bit of reading about CPython implementation details - but still found this paper very readable. It touches on concepts at a high level and seems to have fairly good references for deeper reading if you want to learn more about a given topic. With all that said, here are the notes I took:

Lua does not have arrays, only tables (hash tables)

- Lua 5 can recognize tables used as arrays and back these w/ an array for efficiency

Efficiency: fast compilation and execution of Lua programs

- fast, smart, one-pass compiler and fast VM

The compiler is 30% of the size of Lua core

- It is possible to embed Lua without the compiler and provide pre-compiled programs

The scanner and parsers are hand written

- smaller and more portable than yacc-generated code

- used yacc until 3.0

Compiler uses no IR

- still performs some optimization (although the paper didn’t cover this afaik)

For portability, cannot use Direct Threaded code. See refs [8, 16]

- Uses while-switch dispatch loop

- Complicated implementation sometimes for portability

Eight types: nil, boolean, number, string, table, function, user data, thread

- Numbers are doubles

- Strings are arrays of bytes with explicit sizes

- Tables are associative arrays

- Userdata: blocks of memory (pointers)

  - light (memory managed by user) and heavy (garbage collection)

- threads are coroutines

Tagged union used to represent types

- copying is expensive (3-4 words) because of this

Needed for portability, can’t use tricks that some languages (e.g. smalltalk) use to embed type info in spare bits.

- (because byte alignment differences on various platforms)

Tables are backed by both a hash table and by arrays

- keys like strings end up in hash table

- Numeric keys from 1 onward are not stored, values stored in the array

The backing array has a size limit N

- goal for at least 1/2 of N to be used

- "largest N such that at least half of the slots between 1 and N are in use and at least one used slot between N/2 + 1 and N"

- Access to the array backed keys is faster because no hashing and takes half the memory (due to not storing keys).

- Hash part is chained scatter table w/ Brent’s Variation (Ref [3])

- Another paper for me to read
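To convince myself I understood the sizing rule quoted above, here is a brute-force sketch of it. The function name `array_part_size` is hypothetical and the code simply checks every candidate N against the quoted rule, which is a simplification for illustration and not how the Lua runtime computes it.

```python
def array_part_size(int_keys):
    """Largest N such that at least half of the slots 1..N are in use
    and at least one used slot lies between N/2 + 1 and N."""
    keys = {k for k in int_keys if k >= 1}
    best = 0
    # Brute force: try every N up to the largest key.
    for n in range(1, max(keys, default=0) + 1):
        used = sum(1 for k in keys if k <= n)
        upper_half_used = any(n // 2 < k <= n for k in keys)
        if 2 * used >= n and upper_half_used:
            best = n
    return best


dense = array_part_size([1, 2, 3])          # 3: every slot is in use
sparse = array_part_size([1, 2, 3, 100])    # 5: key 100 would go to the hash part
lonely = array_part_size([100])             # 0: nothing worth an array part
```

The sparse case is the interesting one: a single large key does not force a mostly empty 100-slot array.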

A closure has a reference to its Prototype, environment (e.g. global vars), and an array of references to Upvalues

- Upvalues used to access outer local variables

Function parameters in Lua are local variables

When the variable goes out of scope, it migrates into a slot in the up value itself

- (So a copy is only incurred when needed)

When a new closure is created, the runtime goes through all outer locals and sees if the variable is already an Upvalue in the linked list.

- If found, reuse, otherwise create a new Upvalue.

List search typically probes only a few nodes because the list contains at most one entry for each local variable that is used by a nested function.

- Question: Why a linked list? Is the mutation and traversal here infrequent enough to avoid allocation and pointer indirection overhead? Why not normal array or some other structure?

`create` function receives a main function and creates a new coroutine with that function. Returns a value of type thread that represents that coroutine.

`resume` (re)starts execution, calling the main function that was provided.

`yield` suspends execution and returns control to the call that resumed that coroutine.

`resume`/`yield` correspond to recursive calls/returns of the Lua interpreter function, using the C stack to track the Lua stack for coroutines.

- This implies the interpreter is able to run multiple times, recursively, within the same process without issue.

- The closure implementation helps here by avoiding issues with locals going out of scope.

Two common problems with register-based machines are code size and decoding overhead:

- Instructions have to specify their operands explicitly, so most instructions are larger than those of a stack-based machine, where operands are implicit.

- The paper says instructions are typically 4 bytes vs 1-2 bytes of previous stack-based implementation but that many fewer instructions are emitted for common operations so code size isn’t significantly larger.

- There is overhead in decoding operands from register machine instructions compared to implicit operands of stack machines.

- Due to machine alignment and efficient use of logical operations for decoding, this is still fairly cheap.

Instructions are 32 bits divided into three or four fields.

- Operations are 6 bits, providing 64 possible opcodes

- Field A is always 8 bits.

- Fields B and C take 9 bits each or can be combined into an 18 bit field (Bx is unsigned or SBx is signed).

- Most instructions use three address format, A is the register for the result, B and C point to the operands.

- Operands are either a register or a constant.

- This makes typical Lua operations (like attribute access) take only one instruction.

Branching poses a problem so the VM uses two instructions:

- The branch instruction itself, and a jump that should be done if the test succeeds.

- The jump is skipped if it fails.

- This jump is fetched and executed within the same dispatch cycle as the branch so it is something of a special case within the VM.

Lua uses two parallel stacks for function calls:

- One stack has one entry for each active function and stores the function, return address, and base index for the activation record.

- The other is a large array of Lua values that keeps the activation records.

- each activation record has all temporary values (params, locals)

I wrote my own URL shortener.

That statement might seem surprising at first but I built it for a very specific purpose: I keep a notebook as a cross between a planner, todo list, and scratch paper to keep notes. I use a modified version of the Bullet Journal system and wanted a way to link notes on paper to the webpages I needed to refer to for notes, ideas, and todo items. This was the core of the technical requirements but there were philosophical reasons for building my own URL shortener as well.

I wanted a URL shortener that provided as short of a URL as possible so that I could write it down on paper in my notebooks. Publicly available shortener services and open source projects generally produce fairly lengthy URLs when you’re considering hand writing them and so I came up with my own key space based on my expected usage. I’ll get to that in a moment. The philosophic reasons alluded to before were around data longevity and ownership. I go through approximately two notebooks each year and have been using this method of organization for my life and work for a few years already and don’t see that changing any time soon.

I was concerned with being able to export all URLs I’ve shortened with their keys in the case that whatever service I chose went offline. This is something I looked into before embarking on implementing my own shortener but couldn’t find anything that quelled my concerns so I spent a couple hours one weekend to get the basics implemented.

As mentioned before, I wanted to make the short URLs as easy to write down as possible. I considered the simple approach of incrementing an integer key but quickly moved on. At first it would work very nicely but it would rather quickly reach four-digit keys, even with a low rate of use of 1-3x per day.

Instead, I considered a key space similar to that of base 64 encoding. I didn’t want to deal with non-alphanumeric characters though so I used [a-zA-Z0-9] which ends up being 62 characters. Next I considered how long each key would have to be so that all URLs could be of the same length. Two character keys (62^2 or 3,844) might have lasted a few years but would have eventually run out. Three character keys (62^3 or 238,328) seemed likely to cover me for as long as I wanted since my usage pattern would be manually generated short URLs on demand and fairly infrequently at that.
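The key space arithmetic is easy to check in a few lines. The sketch below also includes a hypothetical `generate_key` helper that picks random unused keys; that is only one plausible way to hand out keys from this alphabet and is an assumption of mine for illustration, as are all the names in the block.

```python
import secrets
import string

# [a-zA-Z0-9]: 26 lowercase + 26 uppercase + 10 digits = 62 characters.
ALPHABET = string.ascii_letters + string.digits
KEY_LENGTH = 3


def key_space_size(length=KEY_LENGTH):
    """Number of distinct keys of the given length."""
    return len(ALPHABET) ** length


def generate_key(existing_keys, length=KEY_LENGTH):
    """Hypothetical generator: draw random keys until an unused one turns up."""
    if len(existing_keys) >= key_space_size(length):
        raise RuntimeError("key space exhausted")
    while True:
        key = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if key not in existing_keys:
            return key


two_char_keys = key_space_size(2)    # 3844
three_char_keys = key_space_size(3)  # 238328

used = set()
new_key = generate_key(used)
used.add(new_key)
```

At three new short URLs per day, 3,844 keys last about three and a half years, while 238,328 keys last a couple of centuries.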

There are three main components of my URL shortener: The input endpoint that shortens URLs, the lookup endpoint that turns a short URL into the real deal, and the short code generator.